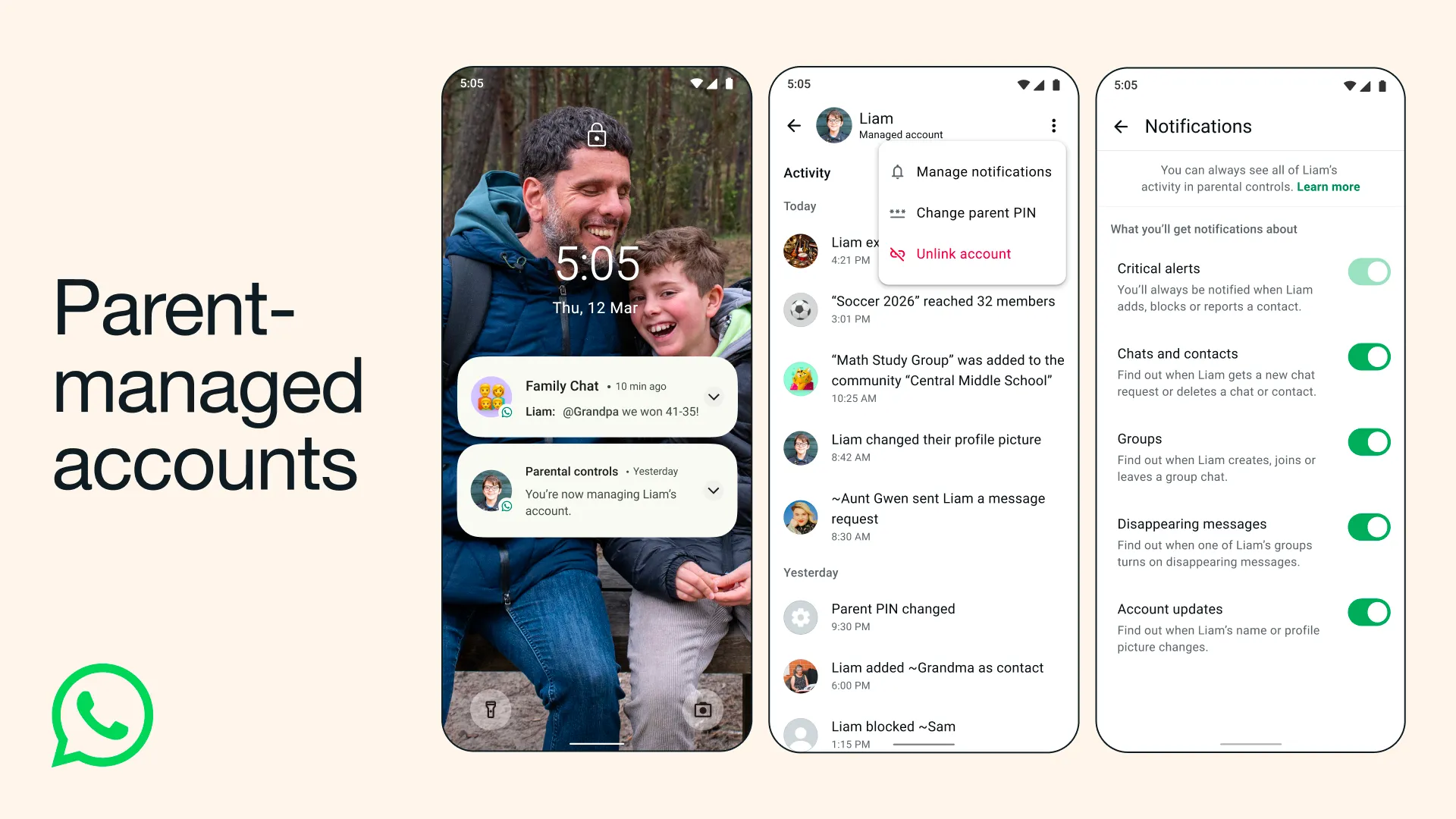

Credit: WhatsApp

Meta has quietly rolled out one of its most parent-friendly features yet: teen accounts on WhatsApp, with a specific configuration for children under 13. The feature lets parents create and manage a WhatsApp account on behalf of their pre-teen, keeping full visibility and control over who the child talks to and what they can do on the app.

It is a significant shift for a platform that has historically been adults-only territory, at least in policy.

What the Feature Does

Parents who set up a supervised account for their pre-teen get a few concrete controls. They can approve or block contact requests before anyone gets through to their child. They can restrict who can message the pre-teen, limit the visibility of their profile photo and status, and disable certain features entirely. The child’s account is also automatically enrolled in WhatsApp’s stricter privacy defaults.

There is also a companion view for parents that shows who their child is connected to without revealing message content. WhatsApp has been careful to keep end-to-end encryption in place even within supervised accounts, so parents cannot read conversations. The oversight covers who the child talks to, not what they say.

WhatsApp launched a version of this earlier for teens aged 13 to 17. The under-13 configuration is newer and more restrictive, which makes sense given that 13 is a widely used threshold for digital consent, including in the US under the Children's Online Privacy Protection Act (COPPA).

Why Now?

The timing is not accidental. Tech companies have been under sustained pressure from regulators and parents’ groups over child safety on social platforms. In the UK, the Online Safety Act has been tightening its grip. In the US, several states have passed or proposed laws restricting minors’ access to social media. The EU’s Digital Services Act also has child protection requirements baked in.

Meta has been trying to get ahead of this wave. Instagram launched teen accounts with parental supervision last year, and WhatsApp is now following the same playbook. The logic is clear: if children are going to use these apps anyway (and they are), it is better to offer a supervised path than to leave parents with no visibility at all.

There is also the question of age verification. WhatsApp, like most platforms, has struggled to enforce its own minimum age requirements. This feature does not solve that problem entirely, but it changes the dynamic. Instead of pretending under-13s do not use WhatsApp, Meta is formalising their presence with guardrails attached.

What Parents and Critics Are Saying

The response has been mixed. Many parents welcome having some structural control, especially for children who already message classmates and family members on the app. The ability to filter contact requests alone addresses one of the most common concerns: strangers reaching children with no friction at all.

Critics argue the feature does not go far enough. Child safety advocates have pointed out that structural controls do not substitute for media literacy or the harder conversations about online behaviour. There is also the concern that normalising WhatsApp use for pre-teens, even in a supervised form, brings younger children into an environment that was not designed with them in mind.

Some privacy advocates have raised a different concern: the parental oversight dashboard creates a record of a child’s social connections, and questions remain about how that data is stored and what Meta does with it.

What This Means for African Users

In markets like Nigeria, WhatsApp is not just a messaging app; it is infrastructure. People use it for business, school groups, family coordination, and community updates. Children have been part of those groups for years, often without any formal account structure.

This feature has real relevance here. Many Nigerian parents already share devices with younger children or have set up informal accounts for them. A supervised account model gives those arrangements a more structured layer of safety, even if enforcement will depend entirely on parents actually using the tools.

The challenge is adoption. WhatsApp’s parental controls are only as effective as the parents who configure them. In contexts where digital literacy varies widely, the existence of a feature and the actual use of it are two very different things. Meta will need to do more than launch the feature; it will need to actively communicate how it works in the markets where it matters most.

The Bigger Picture

WhatsApp’s pre-teen accounts are part of a broader reckoning across the tech industry with how platforms handle younger users. The days of “minimum age 13, no verification required” are numbered. Whether driven by regulation or reputational pressure, companies are being pushed to build real accountability into the product.

Whether supervised accounts are the right answer is still an open question. But they represent something: an acknowledgment that children are already on these platforms, and that responsibility for their safety cannot be left entirely to chance.

For now, parents with children approaching or already at messaging age have one more tool available. It is not a complete solution. But it is more than what existed before.
