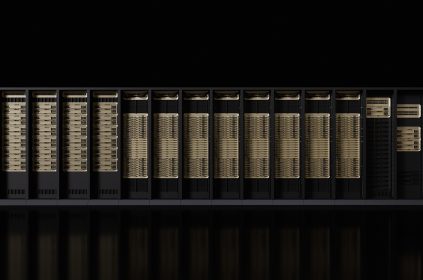

In a bold move to cement its dominance in the burgeoning AI chip market, Nvidia has unveiled its latest GPU architecture, Blackwell. This cutting-edge design promises to redefine the boundaries of what’s possible in large-scale AI training and inference workloads.

The Blackwell architecture represents a significant leap forward, delivering up to 20 petaflops of AI performance, a 4x improvement over the previous-generation Hopper GPUs. But the true marvel lies in its energy efficiency: up to 25x lower energy consumption than its predecessor.

Consider this: training a model the size of GPT-4, a task that would have required 8,000 H100 chips and 15 megawatts of power, can now be accomplished with just 2,000 Blackwell chips and a mere 4 megawatts. This level of optimization is nothing short of game-changing, ushering in a new era of cost-effective and environmentally conscious AI computing.
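The ratios implied by those figures can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above. The function name `reduction_factor` is illustrative, and the per-chip figure is a naive average that assumes the quoted megawatt totals are spread evenly across the chips.

```python
def reduction_factor(before: float, after: float) -> float:
    """Return how many times smaller `after` is than `before`."""
    if after <= 0:
        raise ValueError("after must be positive")
    return before / after


# Figures quoted in the article for a GPT-4-sized training run.
HOPPER_CHIPS, HOPPER_MW = 8_000, 15.0
BLACKWELL_CHIPS, BLACKWELL_MW = 2_000, 4.0

chip_reduction = reduction_factor(HOPPER_CHIPS, BLACKWELL_CHIPS)
power_reduction = reduction_factor(HOPPER_MW, BLACKWELL_MW)

# Naive average draw per chip in kilowatts (assumption: total power
# divided evenly across chips, ignoring what else the total covers).
hopper_kw_per_chip = HOPPER_MW * 1000 / HOPPER_CHIPS
blackwell_kw_per_chip = BLACKWELL_MW * 1000 / BLACKWELL_CHIPS

print(f"Chips: {chip_reduction:.2f}x fewer")
print(f"Power: {power_reduction:.2f}x lower")
print(f"kW per chip: {hopper_kw_per_chip:.3f} -> {blackwell_kw_per_chip:.3f}")
```

On these figures the chip count falls by 4x and total power by 3.75x, while the average draw per chip stays roughly flat. Read that way, the saving in this example comes from needing far fewer chips for the same job.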

While Nvidia’s competitors may view Blackwell as mere “repackaging,” industry analysts see it as a strategic masterstroke. By continuously pushing the boundaries of performance and efficiency, Nvidia is solidifying its position as the go-to partner for enterprises and cloud providers seeking to harness the transformative power of generative AI.

Blackwell’s second-generation transformer engine, which can drop the precision of AI calculations from 8-bit to 4-bit, is a particular highlight. Because each value takes half as many bits, the same hardware can effectively handle twice the computation and twice the model size, opening up new frontiers for large language models and other cutting-edge AI applications.
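To see why halving precision roughly doubles the model size that fits in a given amount of memory, consider weight storage alone. The sketch below is back-of-the-envelope arithmetic, not a description of how the transformer engine works. The 100 GB memory budget and the function names are assumptions chosen for illustration, and the estimate ignores activations, optimizer state, and any scaling-factor overhead.

```python
def weight_bytes(num_params: int, bits_per_weight: int) -> float:
    """Bytes needed to store `num_params` weights at the given precision."""
    return num_params * bits_per_weight / 8


def max_params(memory_bytes: int, bits_per_weight: int) -> int:
    """Largest parameter count whose weights fit in `memory_bytes`."""
    return memory_bytes * 8 // bits_per_weight


# Hypothetical memory budget (assumption, not a Blackwell specification).
MEMORY_BUDGET = 100 * 10**9  # 100 GB

params_8bit = max_params(MEMORY_BUDGET, 8)
params_4bit = max_params(MEMORY_BUDGET, 4)

print(f"8-bit: {params_8bit / 1e9:.0f}B parameters fit")
print(f"4-bit: {params_4bit / 1e9:.0f}B parameters fit")
print(f"Ratio: {params_4bit / params_8bit:.1f}x")
```

The same reasoning applies to throughput: if each value occupies half as many bits, twice as many values move through the same memory bandwidth and arithmetic width per step.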

Crucially, Blackwell’s plug-and-play compatibility with Nvidia’s existing H100 solutions offers a seamless upgrade path for organizations already invested in the company’s ecosystem. This ensures a smooth transition, allowing customers to future-proof their AI infrastructure without disrupting ongoing operations.

The announcement of Nvidia Inference Microservices (NIM) further reinforces the company’s commitment to making AI deployment more accessible. By providing a suite of pre-built models and turnkey tools, NIM empowers businesses of all sizes to integrate state-of-the-art AI capabilities into their products and services rapidly.

As the race for AI supremacy intensifies, Nvidia’s Blackwell architecture solidifies its position as the undisputed leader in the field. By continually pushing the boundaries of performance, efficiency, and accessibility, the company is poised to shape the future of artificial intelligence for years to come.
